Artificial intelligence (AI) algorithms can identify a patient's race from medical imaging data alone. The study reporting this finding, released as a preprint last summer, raised concerns over racial bias in radiology and was recently published in The Lancet Digital Health.

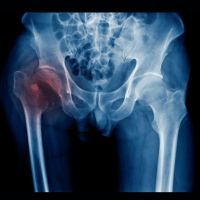

The original preprint showed that deep-learning models could accurately identify a patient's self-reported racial identity from radiology images across multiple modalities, including chest X-rays, mammograms, limb radiographs, cervical spine radiographs, and chest CT scans. The models succeeded even on low-quality images and in cases where clinical experts could not make the identification. Furthermore, the information the AI systems used to determine race could not be readily isolated. The final, peer-reviewed version of the study, for which reviewers required additional experiments, still reaches the same conclusion.

The researchers, led by Dr Judy Gichoya from Emory University, trained and validated a deep-learning model on public and private datasets. No single racial group (Black, Asian, White, Unknown) was predominant across the datasets. Initial training and validation were done on chest X-rays before the team examined the AI's performance in other modalities. Race could be determined even after accounting for body habitus, age, tissue density, and other potential imaging confounders such as a population's underlying disease distribution. In chest X-rays, the AI could still detect race even when differences in anatomy, bone density, and image resolution were taken into account.

The concern is that, because race can be inferred from image data alone, this information could be misused to perpetuate or even worsen the well-documented racial disparities that already exist in medical practice, even when explicit racial data has been removed. Simply removing self-reported race information from medical imaging data is therefore insufficient to obscure a patient's race.

Sources: The Lancet Digital Health, arXiv

Image credit: iStock